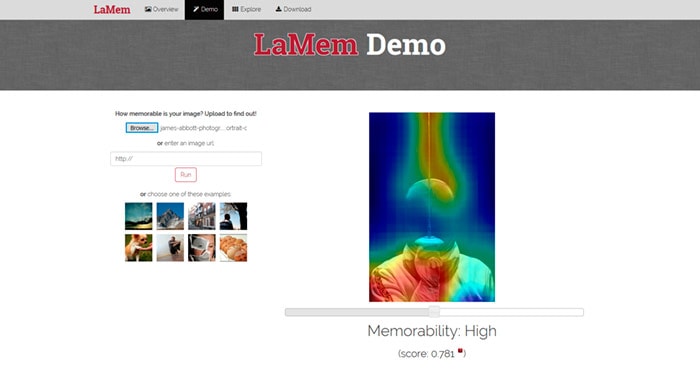

Large-scale Image Memorability, or LaMem, is an online algorithm that aims to tell you how memorable your photos are. I’ve tested it on several of my own images, and the results were surprising but interesting nonetheless.

The developers report that the algorithm reaches a rank correlation of 0.64 with human memory scores, close to the human consistency of 0.68. Of course, it cannot account for emotion, personal preference or any knowledge of what makes a photograph interesting. Follow the link below to try it with your own images.

To try LaMem for yourself, visit http://memorability.csail.mit.edu/demo.html

LaMem appears to prefer large, clearly defined subjects. Images that are popular with real viewers can score poorly, so you have to be the judge of how accurate it really is. That said, it’s still interesting to see how your images score.

This is how the developers describe LaMem:

Progress in estimating visual memorability has been limited by the small scale and lack of variety of benchmark data. Here we introduce a novel experimental procedure to objectively measure human memory. This allowed us to build LaMem – the largest annotated image memorability dataset to date containing 60,000 images from diverse sources.

Reliability

Using Convolutional Neural Networks, we show that fine-tuned deep features outperform all other features by a large margin. It reaches a rank correlation of 0.64 and near human consistency of 0.68. Analysis of the responses of the high-level CNN layers shows which objects and regions are positively, and negatively, correlated with memorability. This allowed us to create memorability maps for each image and provide a concrete method to perform image memorability manipulation.

This work demonstrates that one can now robustly estimate the memorability of images from many different classes, positioning memorability and deep memorability features as prime candidates to estimate the utility of information for cognitive systems.
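The 0.64 figure quoted above is a rank correlation. It measures how well the *order* of the predicted scores matches the order of the scores measured from people, not whether the exact numbers agree. As I understand it, the 0.68 "human consistency" is the same kind of measure taken between two separate groups of people, so it acts as a rough ceiling for any predictor. The developers’ exact procedure isn’t described here. The sketch below assumes Spearman’s rank correlation, the usual choice for this kind of comparison. The scores in it are made up for illustration and are not LaMem output.

```python
def ranks(values):
    """Return 1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # mean of 0-based positions i..j, shifted to 1-based
        for k in range(i, j + 1):
            result[order[k]] = avg_rank
        i = j + 1
    return result


def spearman(x, y):
    """Spearman's rank correlation: the Pearson correlation of the two rank lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length (at least 2)")
    rx, ry = ranks(x), ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    return cov / (sx * sy)


# Hypothetical scores for five photos (illustrative only).
human = [0.91, 0.75, 0.62, 0.80, 0.55]       # e.g. share of people who remembered each photo
predicted = [0.85, 0.70, 0.66, 0.72, 0.60]   # same order as `human`, different values
swapped = [0.85, 0.72, 0.66, 0.70, 0.60]     # two middle photos swapped in order

print(round(spearman(human, predicted), 2))  # 1.0
print(round(spearman(human, swapped), 2))    # 0.9
```

The first prediction scores a perfect 1.0 even though none of its numbers match the human scores, because it puts the photos in exactly the same order. Swapping just two photos drops the score to 0.9. In this light, a figure of 0.64 means the algorithm’s ranking of photos agrees only moderately with the ranking people produce.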